Computational Human Dynamics (CHD)

Computational Human Dynamics (CHD) – Multimodal Social Signal Processing of Dyadic and Group Interactions

With new project funding from the DFG Excellence Strategy for a Cross-Disciplinary Lab in the House of Computing and Data Science, Nale Lehmann-Willenbrock, Timo Gerkmann and Frank Steinicke are laying the foundation for further interdisciplinary research. The two projects will explore automatic group affect detection based on multimodal signals on the one hand, and the development of interaction patterns and social dynamics in the Metaverse on the other hand. This research program makes it possible to answer scientific questions from the perspective of Computer Science and Industrial and Organizational Psychology that cannot be addressed with existing research methods.

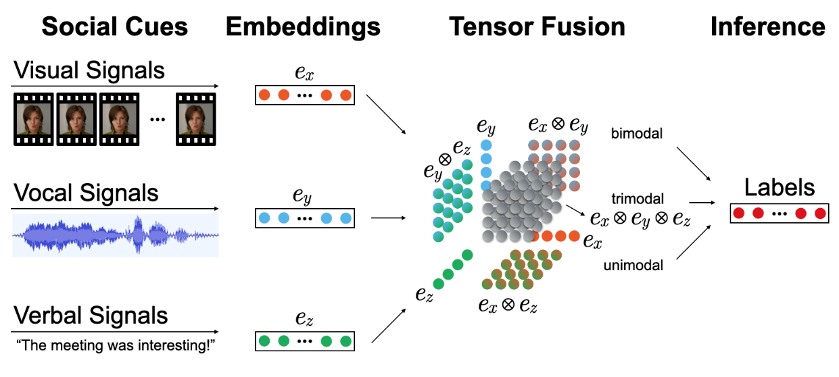

Project 1: Multimodal and Multilevel Modeling of Affect in Virtual Team Meetings

Virtual meetings are prone to numerous interaction challenges, such as the severe reduction of non-verbal cues and the impaired emergence of affect. The display of non-verbal cues and affect is highly relevant for understanding employee behavior and well-being, and for organizational functioning at large. Building on previous theorizing of affect in team meetings as a multilevel and multimodal construct, this project will employ multimodal machine learning approaches that combine modalities such as audio, video, and semantics to model affect at multiple levels of interaction, i.e., at the individual, dyad, and group levels.
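To make the idea of multimodal, multilevel modeling concrete, the following is a minimal illustrative sketch and not the project's actual method. It assumes that each participant already has one affect score per modality (the modality names, the score range, and the functions `fuse_modalities` and `multilevel_affect` are hypothetical), fuses them by simple averaging (late fusion), and then aggregates the fused scores to the dyad and group levels.

```python
from itertools import combinations
from statistics import mean


def fuse_modalities(scores):
    """Late fusion (assumed here): average one person's per-modality affect scores."""
    return mean(scores.values())


def multilevel_affect(per_person):
    """Aggregate fused affect scores at the individual, dyad, and group level.

    per_person maps a participant ID to a dict of hypothetical
    per-modality scores, e.g. {"audio": 0.2, "video": 0.4, "text": 0.6}.
    """
    # Individual level: one fused score per participant.
    individual = {p: fuse_modalities(m) for p, m in per_person.items()}
    # Dyad level: mean of the two members' fused scores for every pair.
    dyad = {
        (a, b): mean([individual[a], individual[b]])
        for a, b in combinations(sorted(individual), 2)
    }
    # Group level: mean over all participants.
    group = mean(individual.values())
    return individual, dyad, group


# Toy input with made-up scores for two participants.
meeting = {
    "A": {"audio": 0.2, "video": 0.4, "text": 0.6},
    "B": {"audio": 0.8, "video": 0.8, "text": 0.8},
}
individual, dyad, group = multilevel_affect(meeting)
```

Averaging is only the simplest possible choice at each step; a learned fusion model or a multilevel statistical model could take its place without changing the overall structure of individual, dyad, and group outputs.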

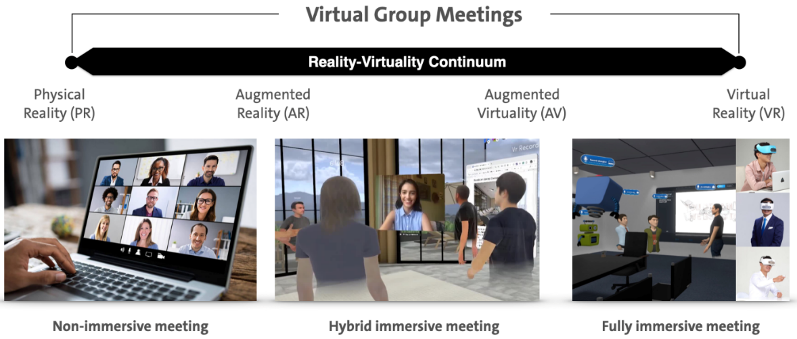

Project 2: Multimodal Social Signal Processing of Dyadic and Group Interactions in the Metaverse

Immersive group meetings in the Metaverse offer a rich context for detecting, analyzing, and understanding multimodal behavioral patterns. In this project, we will evaluate existing libraries and tools for processing social signals in face-to-face (F2F) situations with regard to their performance in, and suitability for, immersive virtual group meetings. Furthermore, we will adapt these tools, develop novel AI-based algorithms, and integrate them into a multimodal social signal processing framework for analyzing, processing, and annotating different forms of (non-)immersive virtual group meetings. This framework will provide the technological basis for investigating dyadic and group behavioral processes, as well as the associated dynamics.

Employees:

Navin Laxminarayanan Raj Prabhu, Fachbereich Informatik, Signal Processing, Universität Hamburg

Marvin Grabowski, Fachbereich Arbeits- und Organisationspsychologie, Universität Hamburg

Principal Investigators:

Nale Lehmann-Willenbrock, Universität Hamburg, Fachbereich Arbeits- und Organisationspsychologie

Timo Gerkmann, Universität Hamburg, Fachbereich Informatik, Signal Processing

Frank Steinicke, Universität Hamburg, Fachbereich Informatik, Human-Computer Interaction